A UX Design Process with ADDIE

First published by Pat Godfrey: July 7 2017


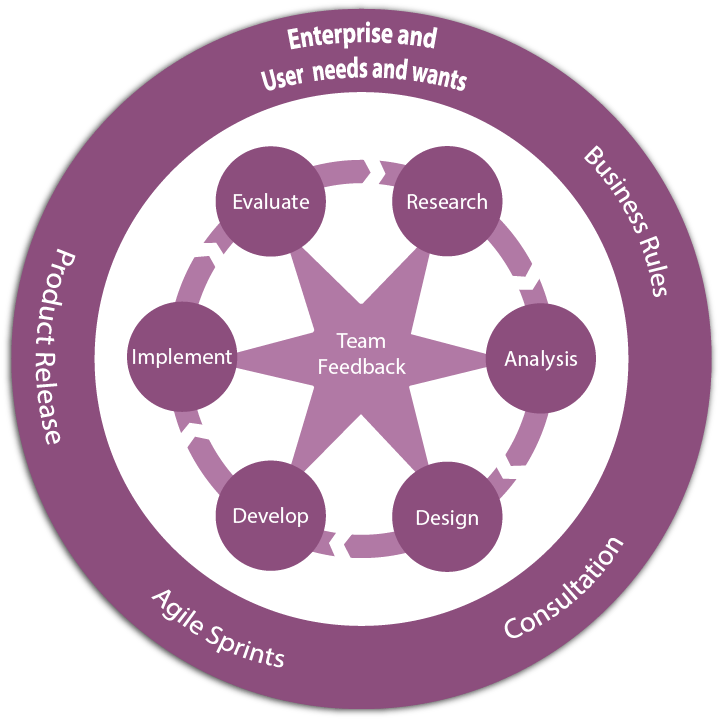

ADDIE (Analysis, Design, Development, Implementation, Evaluation) is a dynamic, cyclic, and evaluative design cycle based on how people learn and think. It focusses and strengthens our "create, test, and learn" cycles.

It is a meta-process. It is not a dogma. It aids design. It does not rule or prejudice it.

The ADDIE cycle considers our users' performance and tasks, fitting user experience (UX) design well. It benefits digital marketing too!

Shared Learning

If you think this content is useful to your friends, colleagues, or connections, then please consider flagging it to them.

I love to Experience Learning Too. Your feedback is welcome.

What is ADDIE?

It's just another design cycle. It just happens to make the most sense to me.

ADDIE (Analysis, Design, Development, Implementation, Evaluation) is similar to Herbert Simon's 1969 decision-making model.

You may be more familiar with similar cycles:

- The "UX Design Process" of Research, Insights, Design Concepts, Test Prototypes, and Develop.

- "Design Thinking" of Empathise, Define, Ideate, Prototype, and Test.

- Eric Ries's Build - Measure - Learn and Think - Make - Check "UX cycles".

- The 6Ds in the Discover, Define, Design, Develop, Deploy and Drive "UX process".

I find ADDIE a comfortable and flexible methodology applicable across a range of situations. It is easy to share and it actively enhances the design process. It applies to the Big Picture and the finest of details.

ADDIE encourages formative evaluation by promoting a reflective practice suited to iteration, continual improvement, and research rather than a linear model of progression. It also fits Agile Scrum perfectly and the (UK) Government Digital Service (GDS) methodologies well.

A process tool

As ADDIE (Analysis, Design, Development, Implementation, Evaluation) needs some Research to analyse, perhaps we should refer to (R)ADDIE?

The (R)ADDIE cycle eddies around and inter-relates to or disrupts other stages in the design and development process.

Analysis should be one of our greatest efforts. Scrimp here, and quality will suffer.

Research

Analysis ☝

Design

Development

Implementation

Evaluation
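As a rough illustration only, the stage list above can be sketched in Python as a loop that wraps from Evaluation back to Research. The names `Stage`, `next_stage`, and `walk` are hypothetical, and the strict ordering is a simplification: in practice the cycle eddies and can disrupt other stages rather than marching in sequence.

```python
from enum import Enum
from typing import List


class Stage(Enum):
    """The (R)ADDIE stages, in the order listed in the article."""
    RESEARCH = "Research"
    ANALYSIS = "Analysis"
    DESIGN = "Design"
    DEVELOPMENT = "Development"
    IMPLEMENTATION = "Implementation"
    EVALUATION = "Evaluation"


def next_stage(current: Stage) -> Stage:
    """Return the stage after `current`, wrapping Evaluation back to Research."""
    stages = list(Stage)
    return stages[(stages.index(current) + 1) % len(stages)]


def walk(start: Stage, steps: int) -> List[Stage]:
    """List `steps` consecutive stages, beginning at `start`."""
    visited = []
    stage = start
    for _ in range(steps):
        visited.append(stage)
        stage = next_stage(stage)
    return visited


# Starting mid-cycle still leads back round to Research.
print([s.value for s in walk(Stage.DESIGN, 5)])
# ['Design', 'Development', 'Implementation', 'Evaluation', 'Research']
```

The point of the wrap-around is that Evaluation is never the end: it feeds the next round of Research and Analysis.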

How ADDIE fits UX

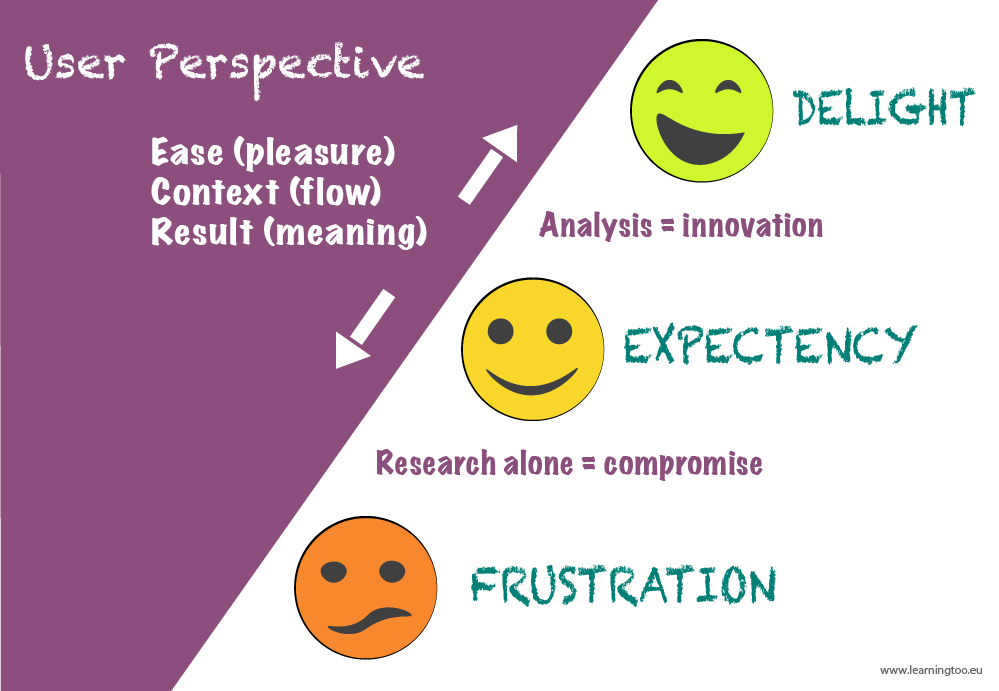

The (R)ADDIE design cycle’s emphasis on Research, and importantly on Analysis, supports our ambition to deliver delightful experiences.

The ADDIE process's cyclic nature steers a design beyond what our users expect. It doesn’t only fix a "flag in the sand" to aim for, but constantly re-targets exactly where that flag should be. Our design can flex and evolve intelligently toward offering our users:

- Ease of effort.

- Context to their tasks.

- Results or feedback.

Research alone cannot improve design. The greater our analysis, the better our design for Ease, Context, and Results.

Note: the Ease, Context, and Results mantra cross-maps reasonably well to Dana Chisnell's "The Three Levels of Happy Design" (Pleasure, Flow, Meaning), which Jared Spool terms "3 Approaches to Delight".

Considering the user journey

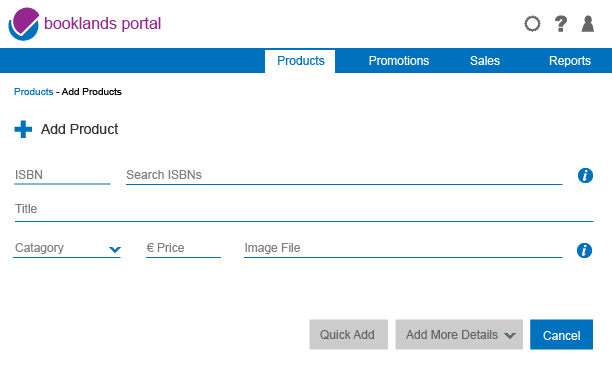

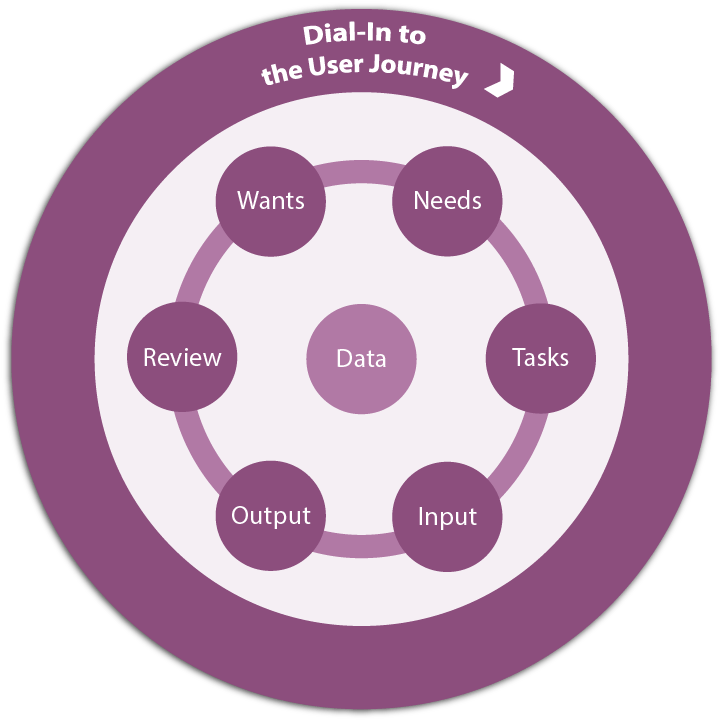

At each stage of the ADDIE process, I ground the work in the basic user journey and the enterprise's values, aims, and objectives. As its name suggests, this is a basic and easy-to-apply tool: not a deliverable such as a Journey Map or Customer Experience Map, etc.

I developed the Basic User Journey from a number of tools and simplified it to relate to our users' interaction with data. It's what we do online. We communicate with our users using data.

I apply my Basic User Journey as an interim Empathy Map, User Journey, and design tool at the macro and micro ends of our workflow. Being cyclic, it eddies down into the finest details, even to individual data inputs. It answers the question, "What are we doing here?"

The more research we carry out, the more illuminating this tool is. And it works on pure logic, experience, and intuition, too.

| Journey Stage | Concept |
|---|---|
| Wants | Wants give context to the user journey and enterprise aims. What our user and our enterprise want may not match what is needed. |
| Needs | Needs may indicate requirements, or preconditions that must be met to complete the journey. |
| Tasks | Tasks are discrete objectives formed from needs, against which success may be measured on completion of the journey. |
| Input | What our user and enterprise must actively do or provide to achieve tasks set during the journey. |
| Output | The result of performing tasks such as knowledge acquisition, product orders being processed, or giving feedback on journey progress, etc. |
| Review | An overview of the status of a task or journey, of any further activity required or set in motion; perhaps an event history or time-line with which to track task or process progress, etc. |
| Recycle | There may be two or more phases: first, that our user and enterprise may access and repeat the journey or tasks as necessary, and second, to review the success of each task or journey with a view to identifying improvements that can be made. |
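For anyone who likes to keep such notes alongside their code, here is a minimal sketch of the table as a checklist. It assumes a Python dataclass with one free-text note per journey stage. The class name `BasicUserJourney`, the `gaps` helper, and the email-field example are all hypothetical illustrations, not part of the original tool.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass
class BasicUserJourney:
    """One note per journey stage; an empty string means 'not yet considered'."""
    wants: str = ""
    needs: str = ""
    tasks: str = ""
    input: str = ""
    output: str = ""
    review: str = ""
    recycle: str = ""

    def gaps(self) -> List[str]:
        """Return the names of stages that still have no note, in journey order."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]


# Hypothetical micro-level example: a single email input on a sign-up form.
email_field = BasicUserJourney(
    wants="Receive order updates",
    needs="A valid, reachable email address",
    tasks="Enter the address once, without errors",
    input="User types the address",
    output="Inline confirmation that the format is valid",
)

print(email_field.gaps())  # ['review', 'recycle']
```

The same structure works at the macro end: fill it in for a whole product journey and the gaps show which stages still need research or analysis.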

Note: See an application of the Basic User Journey that enabled an 'eleventh hour' rapid understanding of, and design update to, a failing UI.

Evaluating, conflict, and change

Conflict should inform our evaluative design process, although it may also harm it.

Conflict in teams shouldn't be 'bloody'. Without care, conflict can damage relationships, erode trust, and ultimately cost the product and enterprise. Yet, without conflict and honest exchanges of opinions and data in an open environment, the design may conclude in consensus built on compromises, fear, and even misguided respect.

Designers particularly must be free to conflict with one another and with the team. Such conflict needs managing, of course, and must steer clear of personal and discriminatory attacks. Sure, there may be emotional wounds, but we must learn from them and return reinvigorated by lunchtime, or at the latest by breakfast.

A 100% UX conflict

In a 100% UX team, conflict will be valued and respected. Research will be commissioned and evidence sought to seek the best outcomes. Learning will be shared in the spirit intended.

An effective team will grow stronger and more adaptable over time. Teams run by ids may suffer permanent damage.

Designers and ~~titans~~ chickens

Designers will almost always conflict between themselves over something or other. At times the conflict will be so trivial you want to bash their heads together (only in a metaphorical way, of course!). You may also see cataclysmic exchanges including salvoes of id. As long as it is not only the loudest voice or largest ego that wins, there are no personality or "pecking order" clashes, and the outcome is positive for the enterprise, there is no reason it cannot be encouraged.

Designers know that conflict is healthy. And they should know when it is not.

Team managers need to be aware that conflict generally has a cause—and right or wrong, that cause may feed vital insight into a design. Just don't leave two or more designers on their own for too long.

They're [designers] you dolt. Apart from you, they're the most stupid creatures on this planet. They don't plot, they don't scheme, and they are NOT ORGANISED!

Melisha Tweedy, Product Manager.

On free speech

...Restricting speech leads to restricting ideas and therefore restricted innovation—the most successful societies have generally been the most open ones. Usually mainstream ideas are right and heterodox ideas are wrong, but the true and unpopular ideas are what drive the world forward...

...You can't tell which seemingly wacky ideas are going to turn out to be right, and nearly all ideas that turn out to be great breakthroughs start out sounding like terrible ideas... When we move from strenuous debate about ideas to casting the people behind the ideas as heretics, we gradually stop debate on all controversial ideas.

Sam Altman's blog, E Pur Si Muove, December 14, 2017 (indicated by Chip Cutter writing on LinkedIn Pulse).

Summary

There's no one-size-fits-all design process. (R)ADDIE is simply one of my tools of choice.

Each designer and enterprise will follow what works for them. It is only essential that the analysis, whatever it is based on (research, intuition, or both), is thorough and not overly compromised by low resources, poor appetite, voluminous voices, or inflated id.

When encouraged, open and transparent conflict can have a positive impact on design. Conflict resolution must be evidence-based and not a compromise that bleeds into our product.

~~Chickens~~ Designers may need careful observation? And feeding. Biscuits.

Reference this article

Godfrey, P. (2017, July 7). A UX Design Process with ADDIE. Retrieved Month Day, Year, from URL